Efficient and Robust Parallel DNN Training through Model Parallelism on Multi-GPU Platform: Paper and Code - CatalyzeX

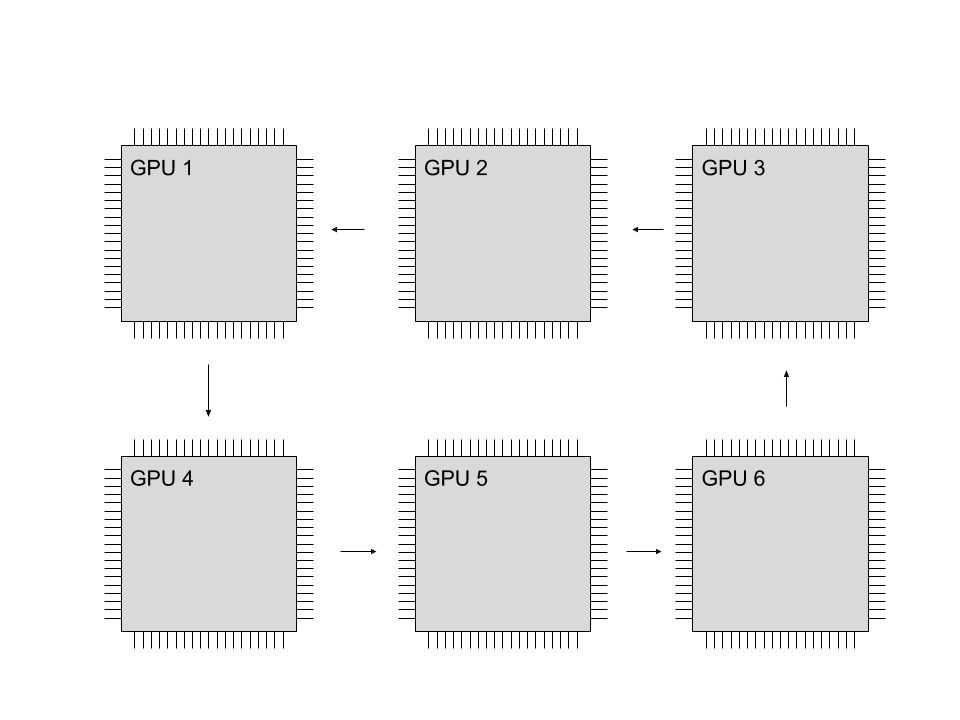

DeepSpeed: Accelerating large-scale model inference and training via system optimizations and compression - Microsoft Research

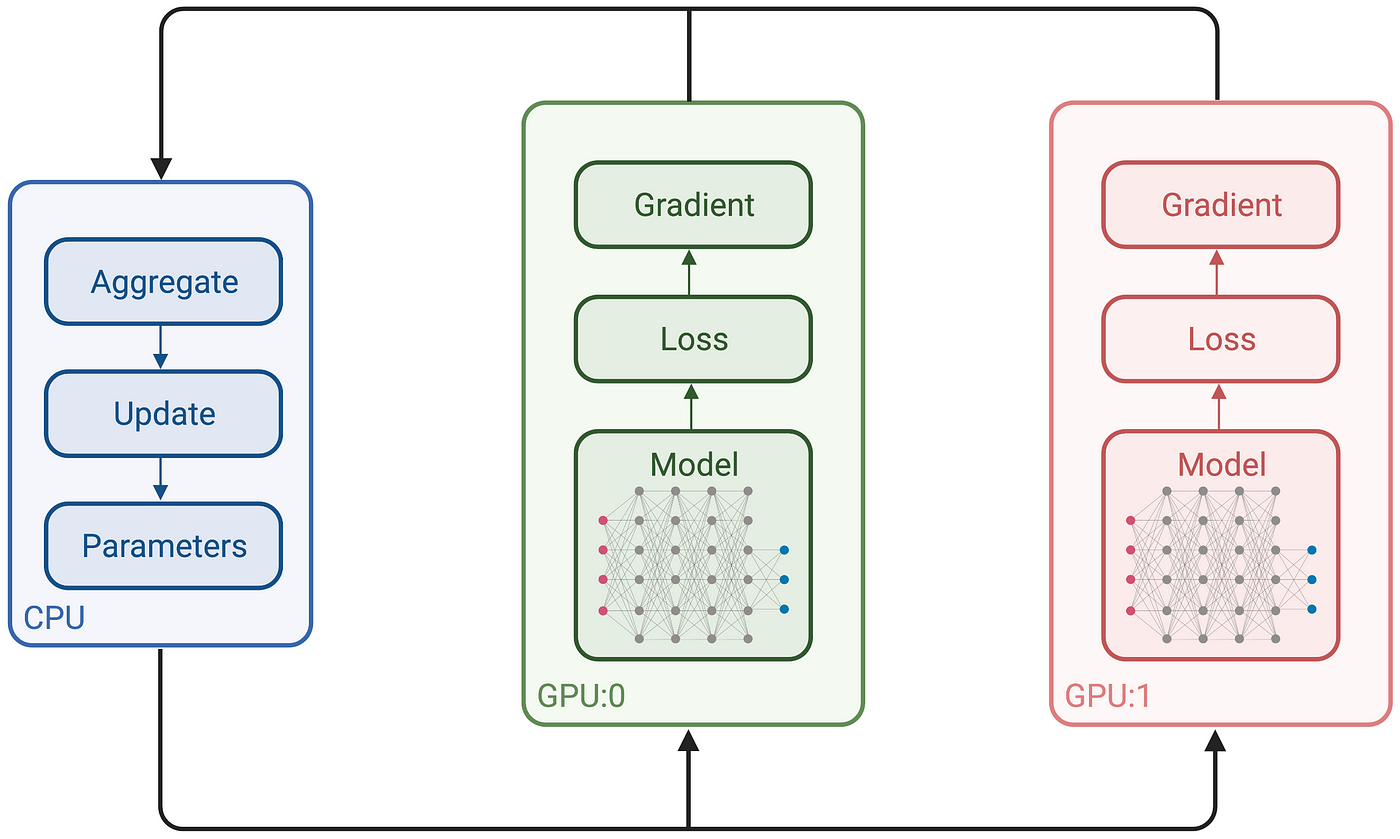

Multi-GPU training. Example using two GPUs, but scalable to all GPUs... | Download Scientific Diagram

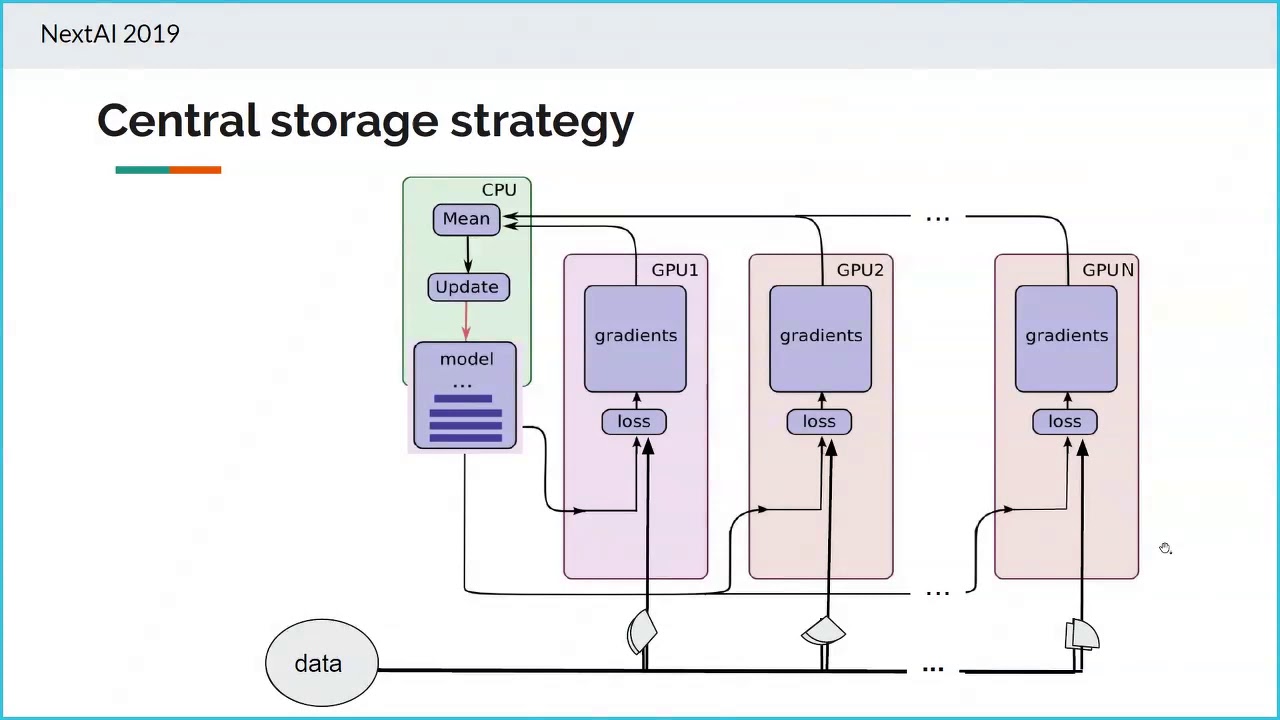

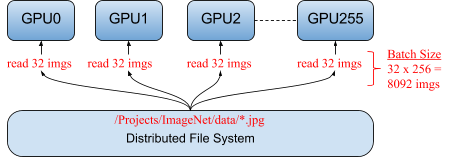

Multi-GPU and distributed training using Horovod in Amazon SageMaker Pipe mode | AWS Machine Learning Blog

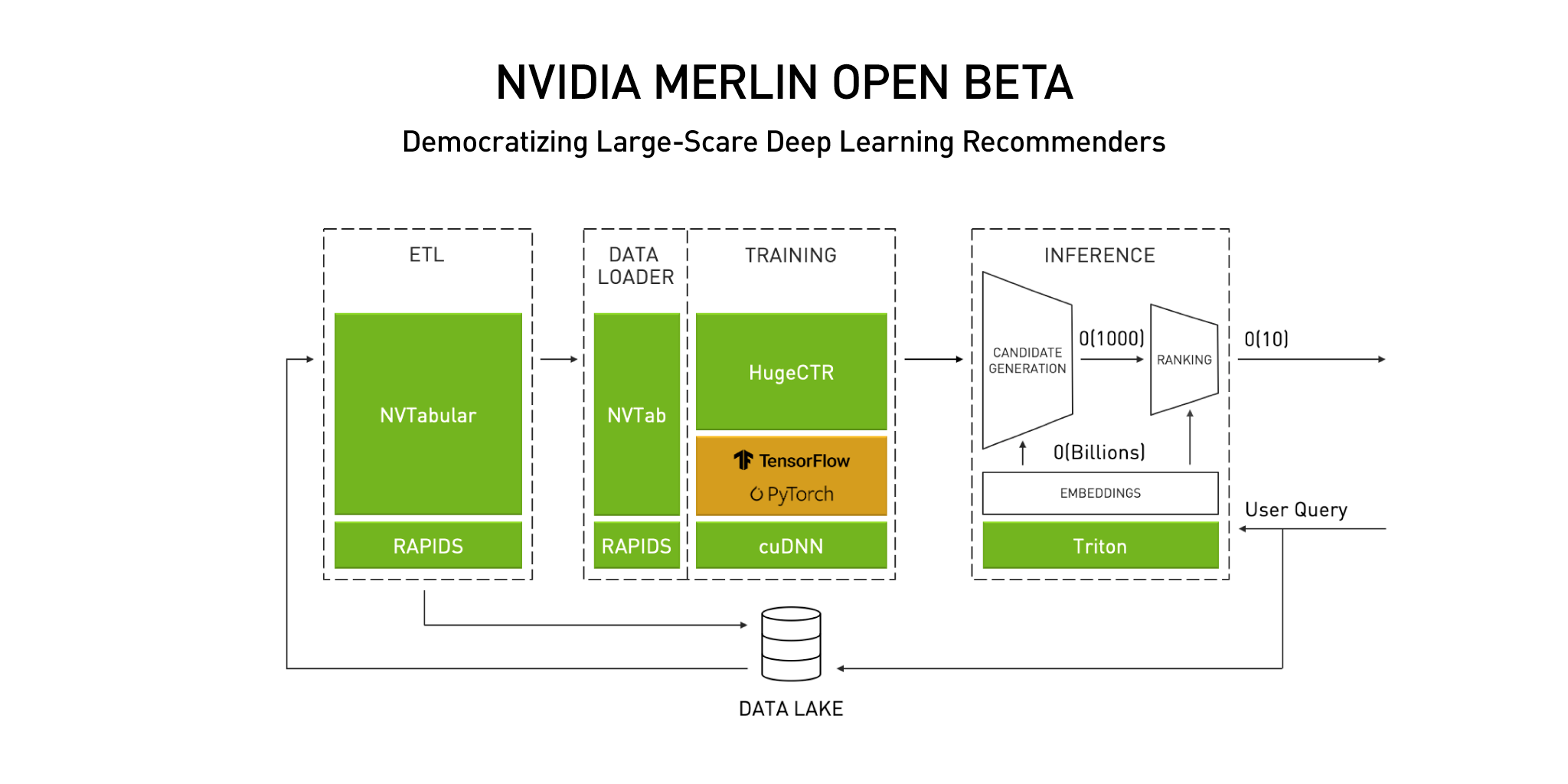

Announcing the NVIDIA NVTabular Open Beta with Multi-GPU Support and New Data Loaders | NVIDIA Technical Blog

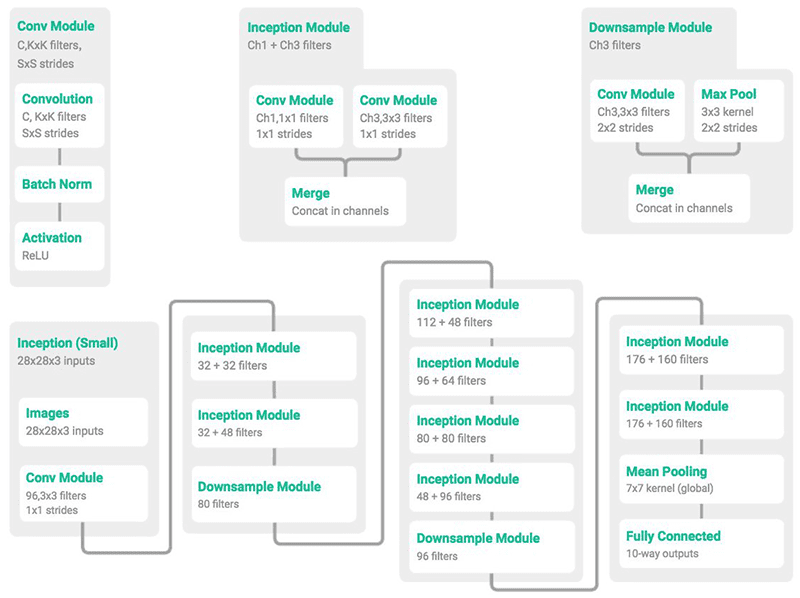

![[PDF] Iteration Time Prediction for CNN in Multi-GPU Platform: Modeling and Analysis | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/55fd0eefc23f262c2875ec4c1c3472a689d88c50/3-Figure1-1.png)